NLP Learning Series: Part 2 - Conventional Methods for Text Classification

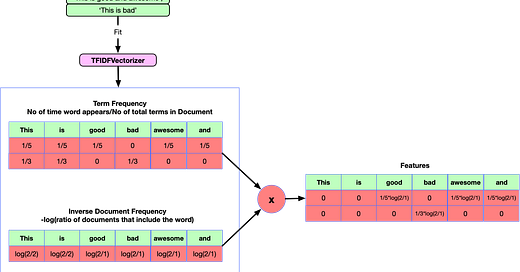

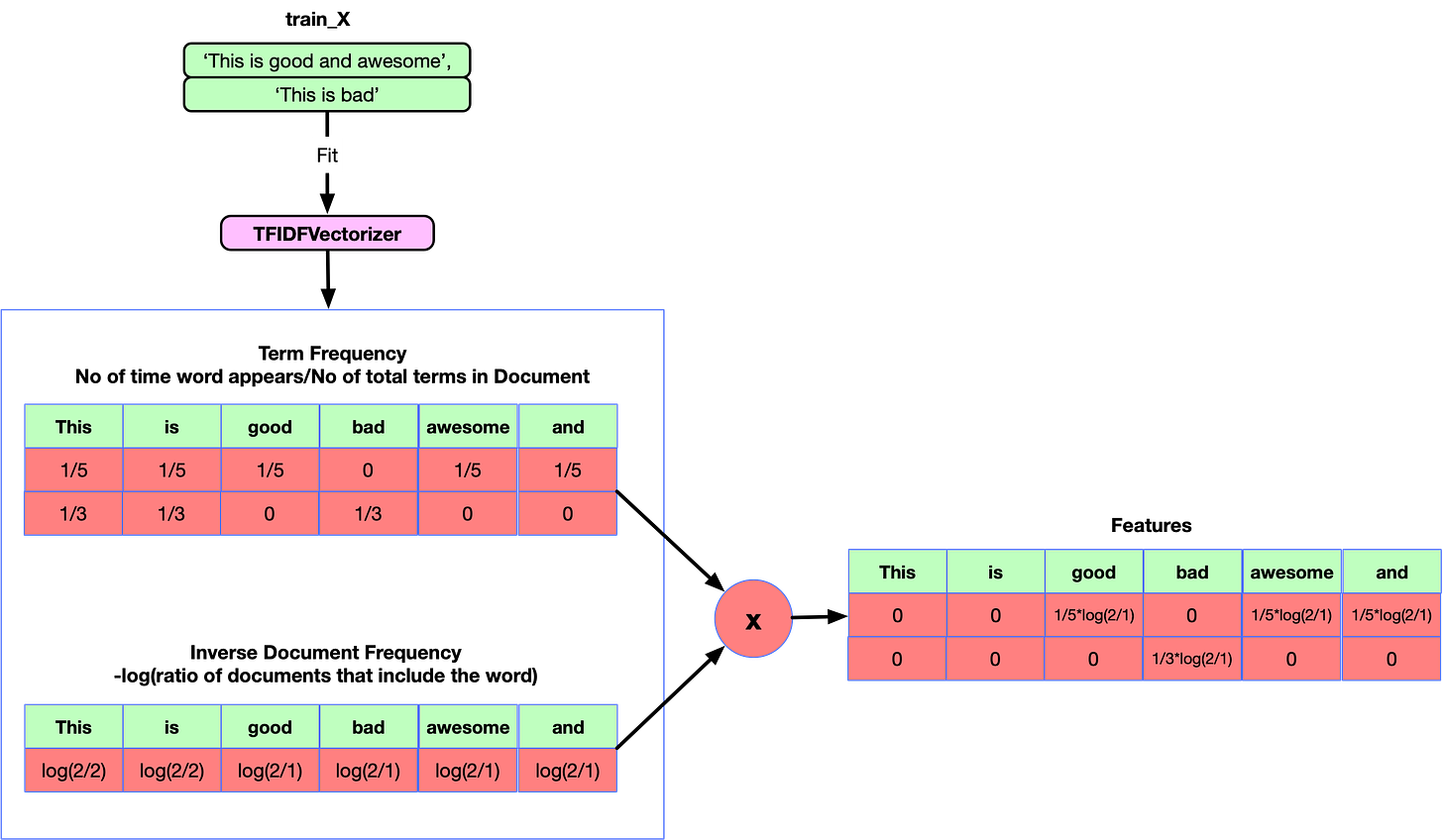

This is the second post in the NLP text classification series. As a recap: I recently started on an NLP text classification competition on Kaggle called the Quora Question insincerity challenge, and I decided to share what I learned through a series of blog posts on text classification. The first post covered preprocessing techniques that work with deep learning models and ways to increase embeddings coverage. In this post, I will take you through some basic conventional approaches, such as TFIDF, Count Vectorizer, and Hashing features, that have been used in text classification, and assess their performance to create a baseline. The third post will delve deeper into deep learning models and focus on different architectures for solving the text classification problem. The fourth post will try out other models that we were not able to use in this competition, such as ULMFit transfer learning approaches.
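To make the three feature types named above concrete before the details, here is a minimal standard-library sketch of count, TF-IDF, and hashed features. It is an illustration only, not the code used in the competition: the toy corpus, the helper names (`tokenize`, `count_vector`, `tfidf_vector`, `hashed_vector`), the bucket count, and the particular IDF formula are all assumptions made for this example. In practice you would more likely reach for scikit-learn's `CountVectorizer`, `TfidfVectorizer`, and `HashingVectorizer`.

```python
import math
import zlib
from collections import Counter

# Toy corpus, made up for illustration only.
docs = [
    "how do i learn python",
    "why do people ask silly questions",
    "how do people learn",
]


def tokenize(text):
    """Lowercase and split on whitespace (a deliberately naive tokenizer)."""
    return text.lower().split()


# Count features: one column per vocabulary word.
vocab = sorted({tok for doc in docs for tok in tokenize(doc)})


def count_vector(text):
    counts = Counter(tokenize(text))
    return [counts[word] for word in vocab]


# TF-IDF: scale each count by an inverse document frequency weight.
# This uses log(N / df) + 1, one common variant among several.
n_docs = len(docs)
doc_freq = Counter(tok for doc in docs for tok in set(tokenize(doc)))
idf = {word: math.log(n_docs / doc_freq[word]) + 1.0 for word in vocab}


def tfidf_vector(text):
    return [count * idf[word] for count, word in zip(count_vector(text), vocab)]


# Hashing trick: no stored vocabulary; each token is hashed into a bucket.
# Different tokens can collide in the same bucket.
def hashed_vector(text, n_buckets=8):
    vec = [0] * n_buckets
    for tok in tokenize(text):
        vec[zlib.crc32(tok.encode("utf-8")) % n_buckets] += 1
    return vec
```

The trade-off this is meant to show: count and TF-IDF features need a vocabulary built from the training data, while hashed features have a fixed width chosen up front and need no vocabulary, at the cost of possible collisions.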

As a side note: If you want to …
